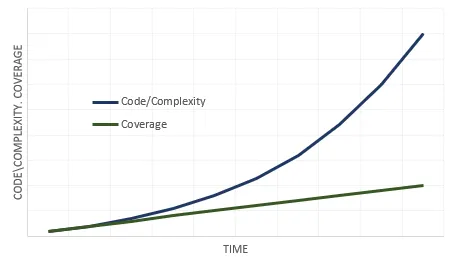

The pace of digital transformation is faster than ever, and it brings increasing software complexity. Teams need to build and deliver software versions at speed while maintaining quality.

When software complexity increases exponentially, test coverage is likely to increase only linearly, and the widening gap creates a quality risk for the product.

Methods such as acceptance test-driven development (ATDD), continuous integration and pair programming have been shifting software testing much earlier in the process. Together with an increased focus on test automation, this has brought improvements. At the same time, the cost of software testing keeps increasing.

Here are methods that help increase software testing coverage and make it more effective, while keeping the cost of building and maintaining tests under control.

Automation

Automation in software testing has existed for a long time. It has enabled testing to shift earlier in the process and ensured that quality is built into the software, rather than relying on quality control at the end of the process.

There are many tools (like Robot Framework, Selenium …) available to automate testing of software functionality (via its backend APIs or user interfaces), security and performance.

However, the cost of automating software testing is high, especially because domain specialists must spend significant effort defining what to test.
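As a minimal sketch of what an automated functional test against a backend API can look like, the example below uses Python's standard `unittest` module. The `InMemoryOrderApi` class and its methods are hypothetical stand-ins invented for illustration; a real suite would call the deployed service or drive it through a tool such as those named above.

```python
import unittest


class InMemoryOrderApi:
    """Hypothetical stand-in for a backend API (assumption, not a real service)."""

    def __init__(self):
        self._orders = {}
        self._next_id = 1

    def create_order(self, item, quantity):
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        order_id = self._next_id
        self._next_id += 1
        self._orders[order_id] = {"item": item, "quantity": quantity}
        return order_id

    def get_order(self, order_id):
        return self._orders[order_id]


class OrderApiTest(unittest.TestCase):
    def setUp(self):
        # Each test starts from a fresh, empty API instance.
        self.api = InMemoryOrderApi()

    def test_created_order_can_be_read_back(self):
        order_id = self.api.create_order("book", 2)
        self.assertEqual(self.api.get_order(order_id), {"item": "book", "quantity": 2})

    def test_non_positive_quantity_is_rejected(self):
        with self.assertRaises(ValueError):
            self.api.create_order("book", 0)


def run_suite():
    """Run the tests programmatically, as a CI job might, and return the result."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(OrderApiTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Calling `run_suite()` executes both tests and returns a result object whose `wasSuccessful()` method a pipeline could use to gate a release. Note that the test logic is short; deciding which behaviours deserve a test is the expensive part.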

Artificial Intelligence

Artificial Intelligence (AI) is increasingly applied to match human intelligence and drive advances in various fields. AI can provide insights that reduce human effort and help avoid errors that increase the cost of ownership.

In software testing, test definition or generation requires domain specialist knowledge, with the corresponding effort and risk of errors. Let's look at how data analytics and AI subfields help reduce this effort and mitigate the risk of errors.

Data Analytics

A key input to test definition is knowledge of individual users, users within a customer account or users overall. The domain specialist knows the users and how they use the software or a software feature.

Modern software is designed to collect data about:

- users (who they are and how they use the software)

- efficiency of executing key business workflows

- business and technical events affecting the state of the software.

By applying data analytics, one can generate valuable inputs to test definition. Descriptive and predictive analytics can reveal user profiles, how users use a particular software feature today, and how they are likely to use it in the future.

Several tools can be combined for data analytics, such as Python, Apache Spark and Databricks. Together with the data collected, these can provide inputs to test definition.
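To make the idea concrete, here is a small descriptive-analytics sketch in plain Python. The event records, role names and feature names are invented for illustration; in practice the data would come from the software's own telemetry and would likely be processed with a tool such as Spark.

```python
from collections import Counter, defaultdict

# Hypothetical usage events: (user_id, role, feature). All values are made up.
EVENTS = [
    ("u1", "admin", "export_report"),
    ("u1", "admin", "manage_users"),
    ("u2", "analyst", "export_report"),
    ("u2", "analyst", "export_report"),
    ("u3", "analyst", "create_dashboard"),
    ("u3", "analyst", "create_dashboard"),
    ("u3", "analyst", "export_report"),
]


def feature_usage_by_role(events):
    """Build a simple user profile: how often each role uses each feature."""
    usage = defaultdict(Counter)
    for _user, role, feature in events:
        usage[role][feature] += 1
    return usage


def rank_features_for_testing(events, top_n=2):
    """Rank features by overall usage so the most used ones get tests first."""
    totals = Counter(feature for _user, _role, feature in events)
    return [feature for feature, _count in totals.most_common(top_n)]
```

With this sample data, `rank_features_for_testing(EVENTS)` puts `export_report` first, suggesting where test effort might pay off most. Ranking by raw frequency is only one possible heuristic; business criticality could be weighted in as well.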

Natural Language Processing

In the process of test definition, the domain specialist defines the scenario in natural language, say English. A developer then interprets it and converts it into an executable test.

In any software there exist:

- a canonical data model expressing the entities, relationships and workflows of the domain.

- well-defined APIs (backend, user interface) that make it possible to interact with the software automatically.

By applying the natural language processing techniques of text classification and machine translation, trained on the domain's canonical data model, one could generate an executable test from a scenario provided by the domain specialist.

There are tools and platforms (like Functionize, test.ai …) evolving that use such techniques for test generation, test maintenance and even scaling of testing.

Beyond the above, there is ongoing research in automatic test generation, for example using genetic algorithms, i.e. asking the machine to generate even the test scenario while the domain specialist trains the machine.
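The classification step can be illustrated with a deliberately simple sketch. It maps each line of a plain-English scenario to an API action by word overlap, which stands in for a trained text classifier. The action names and their vocabularies are hypothetical and play the role of the canonical data model.

```python
import re

# Hypothetical canonical model: each API action with words that describe it.
ACTION_VOCABULARY = {
    "login": {"log", "login", "sign", "authenticate"},
    "create_order": {"create", "place", "order", "buy"},
    "check_status": {"status", "check", "verify", "see"},
}


def tokenize(text):
    """Lower-case the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def classify_step(step):
    """Pick the action whose vocabulary overlaps most with the step's words."""
    tokens = tokenize(step)
    scores = {action: len(tokens & words) for action, words in ACTION_VOCABULARY.items()}
    best = max(scores, key=scores.get)
    # No overlapping word at all means the step cannot be mapped.
    return best if scores[best] > 0 else None


def scenario_to_test_plan(scenario):
    """Translate each non-empty line of a scenario into an (step, action) pair."""
    steps = [line.strip() for line in scenario.splitlines() if line.strip()]
    return [(step, classify_step(step)) for step in steps]
```

For example, the step "Place an order for two books" maps to `create_order`, while a step with no known words maps to `None` and would need the specialist's attention. A production system would replace the overlap score with a model trained on domain data and would also extract parameters such as the item and quantity.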
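A toy version of genetic test generation might look like the sketch below. The function under test, the input range and all parameters are assumptions chosen for illustration. Each individual is a small suite of inputs, and fitness is the number of distinct outcomes the suite exercises, used here as a rough proxy for branch coverage.

```python
import random


def classify_triangle(a, b, c):
    """Hypothetical function under test; each label corresponds to one branch."""
    if a <= 0 or b <= 0 or c <= 0 or a + b <= c or a + c <= b or b + c <= a:
        return "invalid"
    if a == b == c:
        return "equilateral"
    if a == b or b == c or a == c:
        return "isosceles"
    return "scalene"


def fitness(suite):
    """Number of distinct branches (labels) the suite of inputs exercises."""
    return len({classify_triangle(*case) for case in suite})


def random_case(rng):
    return tuple(rng.randint(0, 6) for _ in range(3))


def mutate(suite, rng):
    """Replace one randomly chosen test case with a fresh random one."""
    mutated = list(suite)
    mutated[rng.randrange(len(mutated))] = random_case(rng)
    return mutated


def crossover(left, right, rng):
    """Single-point crossover between two suites of equal length (at least 2)."""
    point = rng.randrange(1, len(left))
    return left[:point] + right[point:]


def evolve(generations=30, population_size=20, suite_size=4, seed=0):
    rng = random.Random(seed)  # seeded so runs are repeatable
    population = [
        [random_case(rng) for _ in range(suite_size)] for _ in range(population_size)
    ]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        survivors = population[: population_size // 2]  # keep the fitter half
        children = [
            mutate(crossover(rng.choice(survivors), rng.choice(survivors), rng), rng)
            for _ in range(population_size - len(survivors))
        ]
        population = survivors + children
    return max(population, key=fitness)
```

Calling `evolve()` returns a suite of four input triples; `fitness` of that suite shows how many of the four branches it reaches. In this sketch the fitness function encodes what "good" means, which is one place where a domain specialist's guidance could enter.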

Software quality is a key area of focus when developing software. Software testing has advanced over the last decade, but approaches, techniques and tools need to evolve more rapidly. That will allow software complexity and test coverage to grow at the same pace.
